
AI has become an undeniable force for organizations today, and HR is no exception. In many ways, People teams are feeling the impact first as expectations, processes, and technology are all shifting at once.

With that level of change, it’s natural for concerns to surface. Between headlines, opinions, and conflicting information, it can be difficult for HR leaders to separate fact from fiction, especially when they know AI can be valuable but aren’t sure how to implement it safely.

In a recent webinar, Tilt CTO Lisa Zimmerman, Tilt’s Head of IT and Security Brian Nolan, Product Marketing Manager Hollis Baker, and Descript’s Bernard Coleman discussed the real concerns HR leaders have about AI in HR and how organizations are beginning to adopt it responsibly. The conversation explored what’s myth versus reality, the role of the Human Checkpoint Model, and practical steps HR teams can start taking today.

Why AI Can Feel Intimidating for HR Leaders

For HR teams, hesitation around AI in HR comes less from resistance to innovation than from responsibility. You manage decisions connected to pay, benefits, leave, and workplace expectations, and introducing new technology into these moments requires careful thought. Recent data reveals that teams are starting to use AI but aren’t yet fully confident in it:

- 60% of HR leaders cite lack of trust as the top barrier to AI adoption.

- 46% worry about understanding AI’s risks and value.

- 82% of HR teams are already using AI, yet only 30% have received any job-specific training.

Descript’s Bernard Coleman has spent years working at the intersection of people, technology, and security. He explains that the challenge isn’t whether AI can deliver value, but how organizations manage the risks that come with it: “There are incredible productivity gains that you can get from [AI]. But there’s a tension in how AI is used. The implications are vast, from ethics of usage to the risk of employees accidentally sharing personal information… [If something were to happen] the organization [would be] accountable for decisions that were never really made. When employees self-select tools without oversight, the company then inherits the consequences because the company enabled that environment.”

Debunking AI Myths in HR

At the same time, many of the concerns surrounding AI in HR are shaped by misconceptions. To move forward responsibly, it helps to separate the common myths from the reality of how AI can actually support HR teams.

Myth 1: AI will replace HR professionals

One of the most common fears surrounding AI is that it will eventually replace HR roles altogether. In practice, AI is far better suited to handling repetitive administrative work like documentation, data organization, and answering routine questions, which enables your team to spend more time on the work that requires judgment, empathy, and strategic decision-making.

Myth 2: AI introduces bias

Because HR manages some of the most sensitive information and decisions in an organization, concerns about bias, alongside privacy and security, are completely understandable. With proper guardrails, AI systems operate within the same security standards and compliance frameworks organizations already use to protect employee data. Bias, meanwhile, is rarely something AI creates on its own.

Tilt’s Head of IT and Security Brian Nolan explains where it comes from: “Bias in AI for HR typically enters at three levels. There’s data from historical patterns, objectives: what we’re choosing to optimize, and context: how the AI affects decisions… AI rarely invents bias. It tends to codify and amplify bias patterns that already exist in processing data.”

Myth 3: Automation leads to more errors

Teams may be concerned that introducing automation will create more mistakes in sensitive processes like payroll, leave management, or employee documentation. In reality, AI is often most effective at handling repetitive administrative tasks consistently, reducing manual errors and freeing HR teams to focus on the review, judgment, and employee support that require human attention.

Myth 4: AI means losing control

Another common concern is that introducing AI means giving up oversight of important HR processes. In practice, responsible AI adoption is designed to strengthen visibility. These systems can surface information, flag potential issues, and support decision-making, all while HR retains full authority over final decisions.

Myth 5: AI erodes company culture

Because HR plays a central role in shaping culture, many leaders worry that introducing AI will make employee interactions feel impersonal. When used thoughtfully, however, it can reduce the administrative burden that often pulls HR away from people-focused work, creating more space for meaningful conversations, guidance, and support during important employee moments.

The Human Checkpoint Model

The Human Checkpoint Model provides a practical structure for introducing AI responsibly. Within this framework, AI gathers and organizes information while humans review decisions that require context, empathy, or judgment.

Tilt CTO Lisa Zimmerman summarized the importance of this structure by saying, “Responsible AI isn’t just about the technology and what it can do. It’s about the structure that organizations build around it. It’s the checkpoints, the oversight, the accountability, and all of that is the HR function and where the human will continue to stay in the model.”

By defining these checkpoints, organizations ensure that AI supports HR work without removing accountability from the people responsible for employee outcomes.
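
For teams working with IT to put this into practice, the checkpoint idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Tilt or any particular product implements it: the category names, the `Task` and `ReviewQueue` classes, and the `checkpoint` function are all hypothetical. The sketch shows one rule: anything in a sensitive category waits for a person, while routine work can proceed automatically.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    AUTO = "auto"            # AI may complete the task on its own
    HUMAN_REVIEW = "human"   # a person must decide before anything happens


# Hypothetical categories that always require human judgment.
SENSITIVE_CATEGORIES = {"pay", "benefits", "leave_decision"}


@dataclass
class Task:
    description: str
    category: str
    ai_summary: str = ""     # information the AI gathered and organized


def checkpoint(task: Task) -> Route:
    """Route any task in a sensitive category to a human reviewer."""
    if task.category in SENSITIVE_CATEGORIES:
        return Route.HUMAN_REVIEW
    return Route.AUTO


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)  # tasks awaiting a person

    def submit(self, task: Task) -> Route:
        route = checkpoint(task)
        if route is Route.HUMAN_REVIEW:
            self.pending.append(task)  # held until HR makes the decision
        return route


queue = ReviewQueue()
faq_route = queue.submit(Task("Answer a PTO policy question", "faq"))
leave_route = queue.submit(Task("Approve a parental leave request", "leave_decision"))
```

In this sketch the list of sensitive categories is the checkpoint definition itself, so HR, not the tool, decides where human review is mandatory.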

How HR Leaders Can Begin Adopting AI Today

As HR teams begin separating fact from fiction around AI in HR, another question quickly follows: where do you actually start? The good news is that adopting AI doesn’t require an overnight transformation. Instead, many HR leaders are beginning with small, practical steps that allow them to introduce AI safely and confidently.

- Start small with low-risk workflows: Begin with tasks like documentation, routing information, or answering common employee questions to build confidence.

- Partner early with your team: Bring IT, legal, and leadership into the conversation from the start so that governance and compliance considerations are aligned.

- Keep humans in the loop: Design workflows where HR leaders remain the final decision makers while AI supports the coordination behind those decisions.

Starting small creates space for experimentation without introducing unnecessary risk. Coleman reflected on this approach by explaining, “Start with low risk use cases to build confidence. For so many years as an HR professional, there’s things that I wanted, but it couldn’t happen. But now you can create those possibilities and do those experiments using [whatever tool you want]. Start small and see what you always wish could be, and then just do it.”

A Practical Path Forward

HR leaders are uniquely positioned to create meaningful impact when they adopt AI responsibly. By starting small and focusing on low-risk use cases, teams can begin experiencing the benefits of AI while maintaining the trust, oversight, and compliance their role requires.

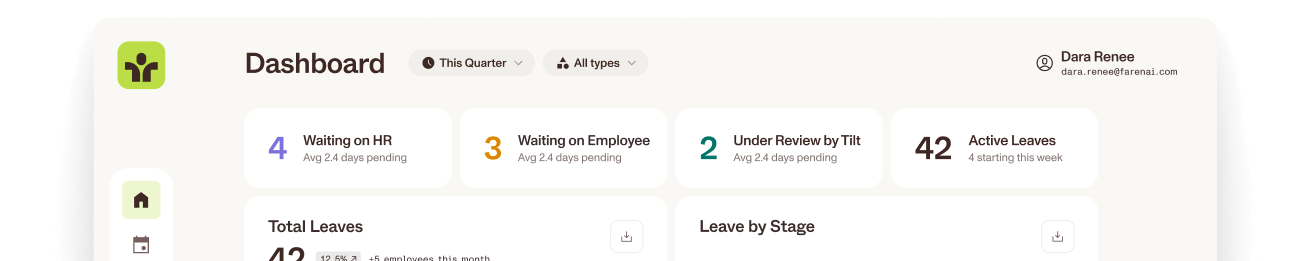

At Tilt, we’re continuing to introduce AI-powered capabilities designed specifically for HR teams managing leave. Leave management is one of the most time-consuming and complex parts of the role, and having the right technology in place can help lighten the load. If you’re looking for a better way to manage leave while keeping employees at the center of the leave journey, schedule time with our team to see how Tilt can help.