On most days, HR leaders are balancing several responsibilities. You’re answering employee questions, reviewing policies, and making decisions that often affect someone’s pay, job, or wellbeing.

Simultaneously, AI is quickly becoming part of how this work gets done. New tools promise faster answers and less manual work, vendors are adding automation to existing systems, and HR teams are being asked to move faster without adding headcount.

The reality? Some HR teams aren’t prepared for AI. Not because they lack interest or capability, but because the structure around AI hasn’t caught up with the speed of adoption. Tools are introduced before policies exist, decisions are influenced by automation before ownership is defined, and data is shared before clear boundaries are in place. This gap between using AI and governing it is the reason many teams don’t feel confident yet, even when they can see the value AI could bring.

The Governance Gap That Keeps HR From Feeling Ready

New tools can be useful, but without clear rules for how they should be used, who is responsible for decisions, and how outcomes should be reviewed, even small changes can introduce uncertainty. The governance gap appears when automation, vendor features, and AI tools are added faster than policies, ownership, and oversight. When that happens, HR may still be using the tools, but it becomes harder to feel confident in the results.

In practice, teams often realize they don’t have structure in place around things like:

- Documented guidelines for AI

- Clear ownership of AI-supported processes

- Visibility into data access and sharing

- Consistent standards across teams

- Audit trails for decision history

This governance gap usually builds over time as new tools are introduced, processes evolve, and responsibilities shift without a shared framework to guide those decisions. As AI becomes part of everyday workflows, the need for clear governance becomes more apparent. That growing pressure is what leads many organizations to start defining formal guardrails for how AI should be used.

Why the Governance Gap Hits HR Harder Than Other Functions

Every department is exploring AI, but the risks look different in HR. Your systems hold personal data, and your decisions often affect someone’s pay, benefits, or employment status. When structure is unclear, the consequences can reach trust, compliance, and the reputation of the entire organization.

The risks aren’t theoretical. They show up in everyday work such as:

- Sensitive information being entered into prompts

- Managers using tools that were never cleared by key stakeholders

- Permissions set incorrectly

- No documentation recording how a decision was supported

Tilt’s Head of IT and Security Brian Nolan explains why human oversight matters in these moments. He says, “From a governance standpoint, the human checkpoint for decisions is really what creates defensibility when you can clearly show who reviewed a decision, what information was considered, where the AI supported, but did not replace human judgment. You reduce legal exposure and you strengthen compliance… Documenting human oversight isn’t just good practice, it’s really essential risk management.”

Oversight matters most where HR workflows carry legal, financial, or personal impact for employees.

Why Sensitive HR Workflows Require Clear Guardrails

The need for governance becomes even clearer in moments where HR decisions carry emotional or legal weight. Performance conversations, accommodations, investigations, and pay changes all require context and clear documentation. AI can support those workflows, but it can’t replace the responsibility HR holds for the outcome.

Tilt’s CTO Lisa Zimmerman explains the difference between automation that runs on its own and automation designed with accountability. “Unchecked automation is where a process just runs end to end and there’s nobody held accountable to it. There’s nobody who’s looking at it and making decisions along the way. It’s just a process—it just runs and finishes. [On the other hand], governed AI is when you have a process that’s transparent, that can be audited and is designed with review points. You can trace a decision back to its source, you can see who set the rules, and what data was used. You want to have review points so that you have a process that’s designed with deliberate moments where a human can intervene, can ask questions, and can override if necessary.”

When workflows include those review points, HR can use automation while still maintaining control over the outcome. That level of visibility is what allows teams to move forward with AI in a way that stays consistent with policy, compliance, and responsibility.
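One way to picture a review point is as a record that stays open until a named person closes it. The sketch below is illustrative only: `LeaveDecision`, `AuditEntry`, the field names, and the example values are all hypothetical and do not describe any particular product. It shows an AI suggestion being logged alongside the data it used, and a human reviewer who can approve or override it, with both steps kept in an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditEntry:
    """One traceable step in a decision's history."""
    timestamp: str
    actor: str   # the person or system that acted
    action: str  # "ai_suggested", "human_approved", or "human_overrode"
    detail: str


@dataclass
class LeaveDecision:
    """Hypothetical AI-supported decision that only a human can finalize."""
    case_id: str
    data_used: List[str]
    ai_suggestion: str
    final_outcome: Optional[str] = None  # stays None until a human reviews
    audit_log: List[AuditEntry] = field(default_factory=list)

    def _log(self, actor: str, action: str, detail: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditEntry(stamp, actor, action, detail))

    def record_suggestion(self, model_name: str) -> None:
        """Log what the AI suggested and which data it considered."""
        detail = f"{self.ai_suggestion} (data: {', '.join(self.data_used)})"
        self._log(model_name, "ai_suggested", detail)

    def review(self, reviewer: str, outcome: str, reason: str) -> None:
        """Review point: a human sets the outcome and may override the AI."""
        if outcome == self.ai_suggestion:
            action = "human_approved"
        else:
            action = "human_overrode"
        self.final_outcome = outcome
        self._log(reviewer, action, reason)


decision = LeaveDecision(
    case_id="case-001",
    data_used=["leave request form", "tenure"],
    ai_suggestion="approve",
)
decision.record_suggestion("assistant-model")
decision.review("hr.partner@example.com", "escalate", "Needs accommodation review")

for entry in decision.audit_log:
    print(entry.actor, entry.action, entry.detail)
```

The design choice worth noting is that `final_outcome` is never set by the AI step, so the log always shows who made the call and why.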

What Strong Governance Actually Looks Like

Strong governance doesn’t slow teams down. It gives them the clarity needed to move faster without creating risk. A well-governed approach to AI for HR usually includes:

- Documented policies for AI use

- A list of approved and prohibited tools and vendors

- Defined data handling rules

- Role-based permissions

- Audit logs and traceability

- Clear escalation paths

When these guardrails exist, HR can introduce new tools with confidence because everyone understands how decisions are made and who’s responsible for them.
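Several of these guardrails can be written down as plain data that a simple check reads. The following is a minimal sketch under stated assumptions: the tool names, role names, task names, and escalation address are invented for illustration, and a real organization would define its own. It combines an approved-tool list, role-based permissions, and an escalation path in one function.

```python
# All names below are hypothetical placeholders, not real products or policies.
APPROVED_TOOLS = {"policy-assistant", "leave-summarizer"}

ROLE_PERMISSIONS = {
    "hr_admin": {"draft_policy", "summarize_case", "view_pay_data"},
    "hr_generalist": {"draft_policy", "summarize_case"},
    "manager": {"draft_policy"},
}

ESCALATION_CONTACT = "hr-governance@example.com"


def check_ai_use(role, tool, task):
    """Return (allowed, message) for a proposed use of an AI tool."""
    if tool not in APPROVED_TOOLS:
        return False, f"'{tool}' is not approved; escalate to {ESCALATION_CONTACT}"
    # Unknown roles get an empty permission set, so they are denied by default.
    if task not in ROLE_PERMISSIONS.get(role, set()):
        return False, f"'{role}' may not '{task}'; escalate to {ESCALATION_CONTACT}"
    return True, "allowed"


print(check_ai_use("manager", "policy-assistant", "draft_policy"))
print(check_ai_use("manager", "policy-assistant", "view_pay_data"))
print(check_ai_use("hr_admin", "unvetted-chatbot", "draft_policy"))
```

Denying by default, for both unknown tools and unknown roles, keeps the check aligned with the idea that new uses go through review first.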

Where To Start

Closing the governance gap doesn’t require a full transformation on day one. It starts with visibility and shared ownership across teams like HR, IT, security, and leadership.

Taking a few deliberate steps early can help create the structure needed to expand AI use with confidence. Practical first steps can include:

- Inventory the AI tools already in use

Start by identifying where AI is already showing up across HR, managers, and vendors so you understand what decisions may already be influenced by automation.

- Review vendor data policies

Take time to confirm how employee data is stored, processed, and shared so you know exactly what information external systems can access.

- Define restricted data categories

Clearly document what types of employee information should never be entered into AI tools, especially anything related to PII, investigations, or pay.

- Create an approval workflow

Establish a simple process for reviewing new tools before they’re used so adoption stays intentional and aligned with company policy.

- Document decision ownership

Make it clear who is responsible for reviewing outcomes when AI is involved so employees and leaders always know where accountability sits.

- Partner with teams early

Involving teams like security and IT at the beginning helps HR move faster. This can ensure guardrails are designed before problems need to be fixed.
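As a rough illustration of the restricted data step, a documented list of categories can double as a lightweight screen on text before it is pasted into an AI tool. This is a sketch, not a complete safeguard: the category names and patterns are assumptions chosen for the example, and simple pattern matching will miss plenty of sensitive content, so it would complement training and policy rather than replace them.

```python
import re

# Hypothetical restricted categories; each maps to a crude detection pattern.
RESTRICTED_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "pay": re.compile(r"\b(salary|compensation|pay rate)\b", re.IGNORECASE),
    "investigation": re.compile(r"\binvestigation\b", re.IGNORECASE),
}


def find_restricted(text):
    """Return the sorted names of restricted categories detected in text."""
    return sorted(
        name
        for name, pattern in RESTRICTED_PATTERNS.items()
        if pattern.search(text)
    )


prompt = "Her SSN is 123-45-6789 and her salary is under review."
hits = find_restricted(prompt)
if hits:
    print("Do not send. Restricted categories found:", ", ".join(hits))
else:
    print("No restricted categories detected.")
```

Keeping the categories in one dictionary means the documented policy and the automated screen can be updated together.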

Descript’s Bernard Coleman explains why this collaboration matters. “You have to work in partnership for the human checkpoint to work because everyone has their roles and responsibilities in making sure that the rules are defined and that it’s well-governed. That framework of policies in place protects employees and maintains company trust.”

Governance works best when HR owns people decisions—and other stakeholders help make those decisions safe.

What Becomes Possible When the Governance Gap Is Closed

When governance is clear, AI becomes easier to adopt. Decisions are easier to explain, processes stay consistent, and new tools can be introduced without uncertainty. HR gains visibility into how work is happening, which makes it easier to guide managers, answer employees, and show leadership that the team is operating with both care and discipline. Instead of feeling like technology is moving faster than the organization can keep up, governance gives HR the structure needed to move forward with clarity, control, and credibility.

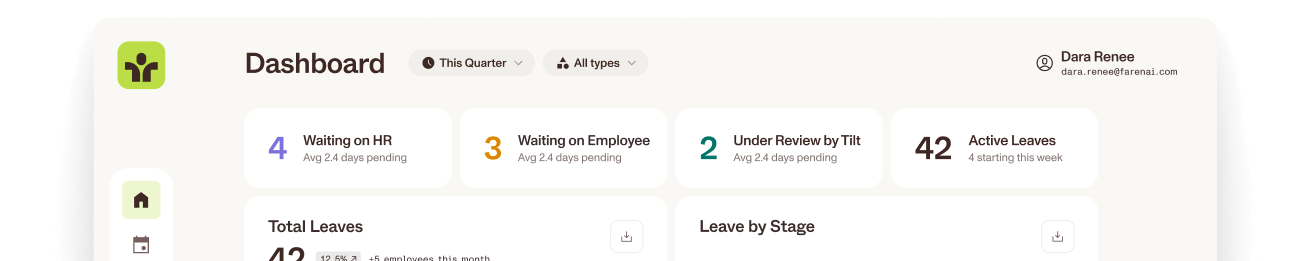

As teams put clear governance in place, it becomes easier to take the next step with AI in workflows that carry the most complexity, including leave management. At Tilt, we’re continuing to build AI-powered features backed by insight from more than 50,000 leave cases, helping HR teams manage leave with more clarity, consistency, and confidence. Tilt’s Leave Experience Management (LXM) platform is designed with clear guardrails so automation supports decisions without taking control away from HR. If you want to see how AI can simplify your leave management experience, you can meet with a member of our team.

FAQ

What does AI readiness mean in HR?

AI readiness means having governance, policies, and oversight in place so AI in HR can be used safely, consistently, and with clear accountability.

Why do HR teams sometimes struggle with AI adoption?

HR teams may struggle with AI adoption when tools are introduced before policies, approvals, and ownership are defined, creating a governance gap.

Why is governance important for AI in HR?

Governance helps AI-supported HR workflows stay compliant, transparent, and fair, which protects employee trust and company culture and helps the organization meet its regulatory responsibilities.