HR sits at the intersection of employee trust, legal responsibility, and business pressure, where even small decisions can have a lasting impact on culture, morale, and risk. Information lives across multiple systems, vendors control pieces of the process, and when something doesn’t line up, HR is responsible for making it clear to everyone else.

As AI becomes part of everyday conversations in the workplace, many leaders see the opportunity to reduce manual work and move faster. But the idea of handing sensitive workflows to a tool can create hesitation. When decisions involve personal data, compliance, and moments that shape employee trust, speed alone doesn’t create confidence. You need to know that the systems supporting the work are predictable and safe to use.

That’s where the idea of safe AI becomes important. It means adopting AI in a way that keeps your team in control while allowing the work to move forward with clarity. The foundation for doing that comes down to three pillars: transparency, governance, and human oversight.

Why AI Safety Matters Differently in HR

Every function relies on technology, but HR manages information with a different level of sensitivity. Personally identifiable information (PII), compensation data, medical leave records, and performance history all sit inside HR systems. If AI interacts with that information, the impact of a mistake can reach far beyond a single workflow.

Tilt’s Head of IT and Security, Brian Nolan, points out that risk can even come from everyday use of tools that feel harmless in the moment. He explains, “As you look to use tools like ChatGPT, Claude, and Gemini, there’s a difference in the contractual terms between licensed enterprise versions and the free versions that are available to anyone on the internet. So be careful about what sensitive data you put out into the free versions. They might not have the data training and privacy that you expect.”

This is why safe AI in HR involves more than security alone. Teams should understand where data goes, how outcomes are generated, and who is responsible for the final decision. Without that visibility, even helpful technology can introduce uncertainty instead of reducing it.

The Three Pillars of Safe AI in HR

Responsible AI adoption becomes much easier to manage when it’s anchored in clear principles. For HR teams, each of these principles plays a different role, and together they create the structure that allows AI to support the work without taking control of it.

Transparency: Knowing How AI Reaches an Outcome

Transparency means HR understands what the AI is doing and why. Your team should be able to see what data is being used, how results are generated, and where information is stored or processed. When that visibility exists, decisions can be explained clearly to employees and leaders.

Nolan often reminds teams that many of the biggest risks trace back to the data itself. He explains that “bias in AI for HR typically enters at three levels. There’s data, from historical patterns; objectives, which are what we’re choosing to optimize; and context, how the AI affects decisions… AI rarely invents bias. It tends to codify and amplify bias patterns that already exist in processing data.”

Understanding those patterns is what makes transparency critical. Practical steps can include:

- Documenting where AI is used

- Reviewing vendor policies

- Keeping audit logs

- Confirming how outputs connect back to inputs

- Involving key partners like IT and legal teams

When the process is visible, HR can stand behind the outcome with confidence.

Governance: Creating Structure HR Can Depend On

Once your team understands how the tool works, the next step is defining how it should be used. Governance provides that structure by setting clear expectations for tools, workflows, and decision rights across the organization.

Without governance, AI adoption often happens in small, disconnected ways. A manager tries a new tool, a vendor adds automation, or a workflow changes without anyone documenting it. Over time, those small changes can make it difficult to explain how decisions were made.

Nolan explains that strong design gives HR more control, not less. “Control doesn’t come from slowing AI down; it comes from designing it well. When roles and decision rights and review checkpoints are clearly defined, AI actually increases transparency. And when you can see how decisions are being made, where exceptions occur, and where intervention is needed, that visibility often gives HR more control than the manual process has ever done.”

Clear governance can include:

- Lists of approved and prohibited tools and vendors

- Defined escalation paths

- Guidance on what data can be entered into AI systems

With those guardrails in place, the team can move faster because everyone understands the boundaries.

Human Oversight: Keeping Judgment Where It Belongs

Even with strong transparency and governance, decisions should always stay with people. AI can organize information, summarize policies, and flag risks, but decisions that affect employees require context and judgment that only HR can provide.

Nolan emphasizes that this checkpoint is what creates defensibility. “From a governance standpoint, the human checkpoint for decisions is what creates defensibility when you can clearly show who reviewed a decision, what information was considered, [and] where the AI supported but did not replace human judgment,” he shares. “You reduce legal exposure and you strengthen compliance, especially in areas like leave management where decisions directly affect someone’s livelihood and their wellbeing. Documenting human oversight isn’t just a good practice, it’s really essential risk management.”

Human oversight protects compliance, and it also protects culture. Employees trust HR when decisions feel consistent, thoughtful, and fair.

Taking the First Step Toward Safe AI

Safe AI adoption doesn’t start with a full transformation; it starts with clarity. When HR teams understand where AI is used, what data it touches, and when human review is required, the first step becomes much easier to take. Transparency makes decisions explainable, governance keeps workflows consistent, and human oversight ensures the final call stays where it belongs. Together, they let AI reduce manual work and improve visibility without taking control away from the people responsible for the outcome.

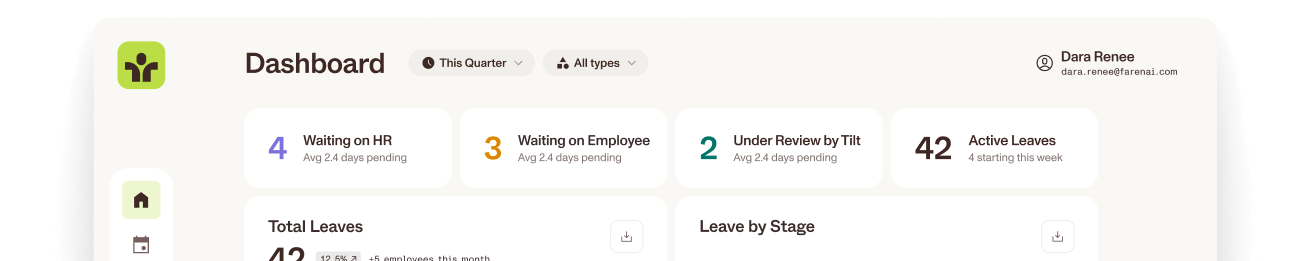

When teams are ready to put these principles into practice, the right technology can make that transition much easier to manage. Tilt’s Leave Experience Management (LXM) platform uses AI to give HR full visibility into leave details, clear checkpoints, and the structure needed to stay compliant without slowing down the leave experience. With AI supporting the process and HR staying in control, teams can handle leave with confidence while continuing to deliver the level of care employees deserve.